Protecsys 2 Suite: Premises and Employee Safety

Protecsys 2 Suite: in summary

Protecsys 2 Suite: a global security solution

Horoquartz's Protecsys 2 Suite offers advanced, integrated features to improve the safety and security of your assets and employees:

- Access Control

- Video surveillance

- Autonomous locks

- Visitor management

- Intrusion detection

- Supervision

With Protecsys 2 Suite, you can:

- Avoid intrusions and unauthorised access with a complete access control solution.

- Reinforce prevention and deterrence with video surveillance and intrusion detection solutions.

- Identify threats and crisis situations more easily with centralised and integrated supervision.

- Easily and cost-effectively secure a large number of doors with a wide range of stand-alone locks.

- Improve visitor reception with a module for managing and preparing visits.

Reduce the costs of insecurity

Protecsys 2 Suite ultimately helps you reduce the costs of insecurity, both direct (damage to property and people, business interruption, data theft, etc.) and indirect (security, insurance and claims management costs), while also increasing employees' sense of security.

Adapting to the expected level of security

The expected level of security depends largely on the customer's business and organisation. A Seveso site, an OIV (operator of vital importance), a public-facing administration, a retail outlet or a food-processing site may each have different regulatory obligations. Protecsys 2 Suite adapts to each company's security policy by offering the appropriate features and modules.

Horoquartz: a unique positioning on the safety and security market

Horoquartz masters the full range of solutions deployed at customers' sites, combining software publishing, hardware design and manufacturing, and systems integration.

Horoquartz employs 530 people in 19 branches in France. In 2017, the company achieved a turnover of nearly 55 million euros and devotes more than 15% of its annual turnover to research and development.

Protecsys 2 Suite already equips more than 1,200 sites in France in all sectors of activity.

Its benefits

GDPR

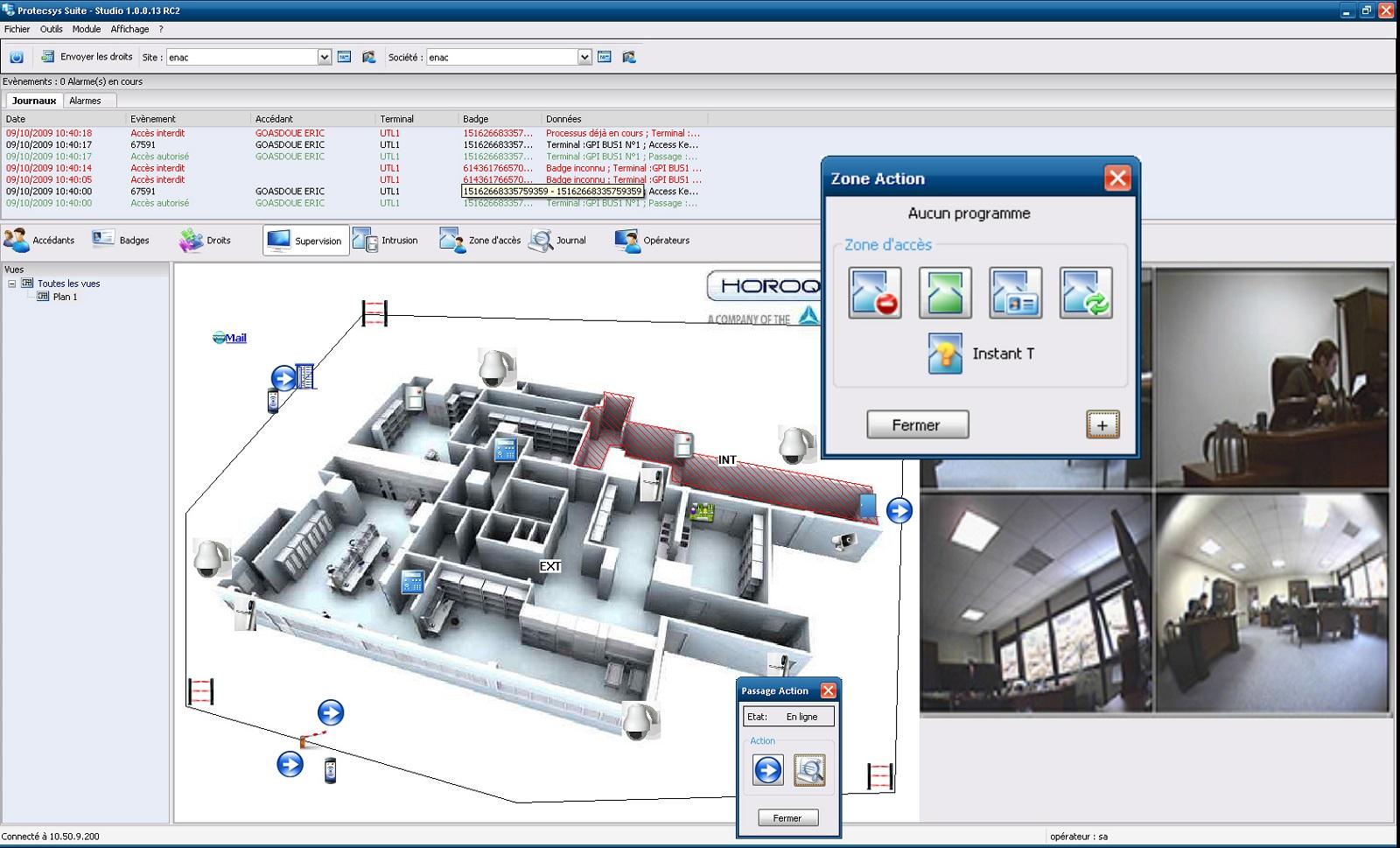

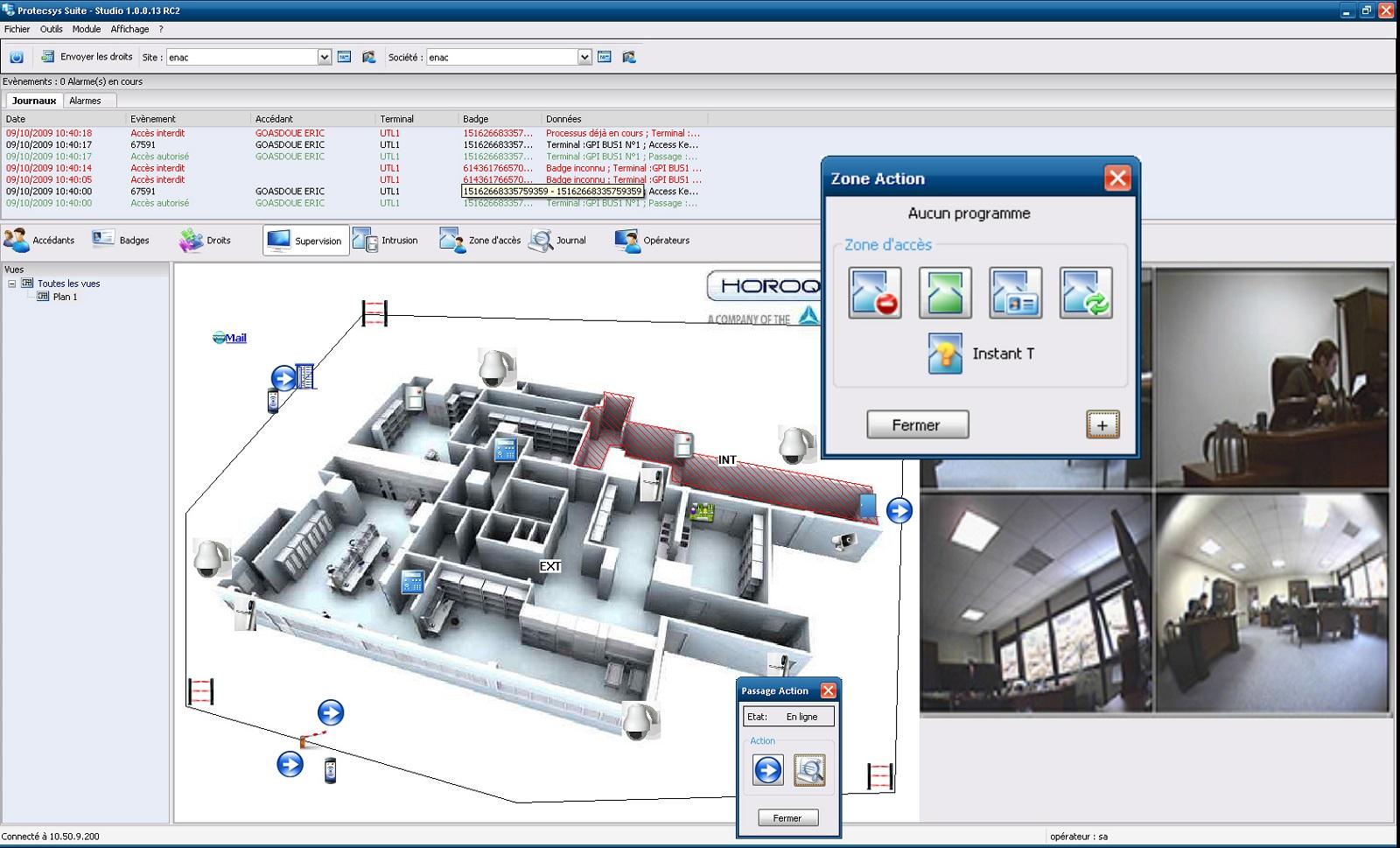

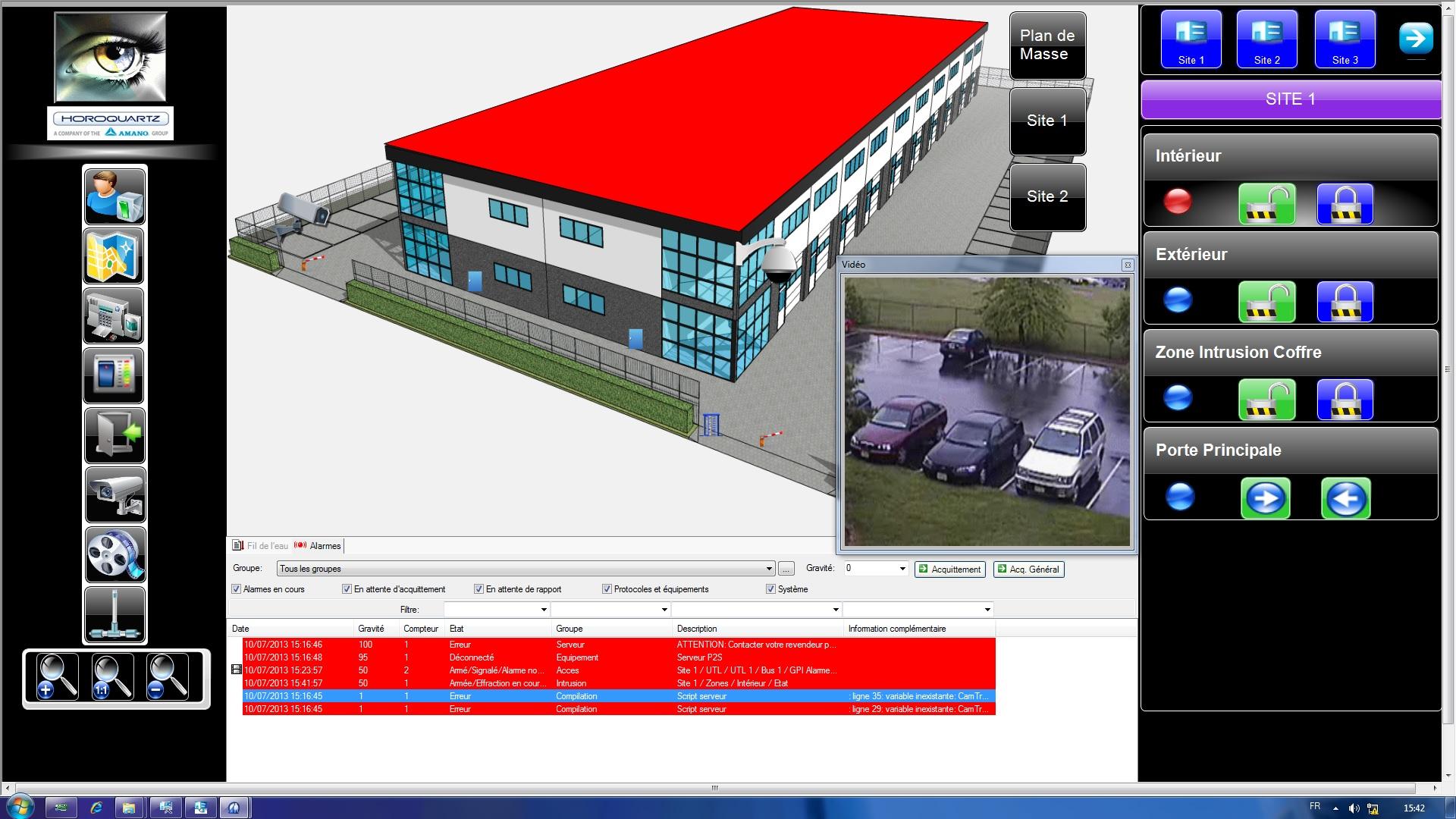

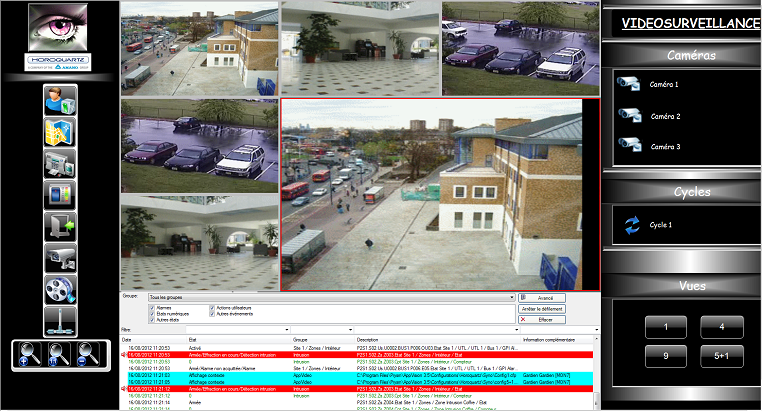

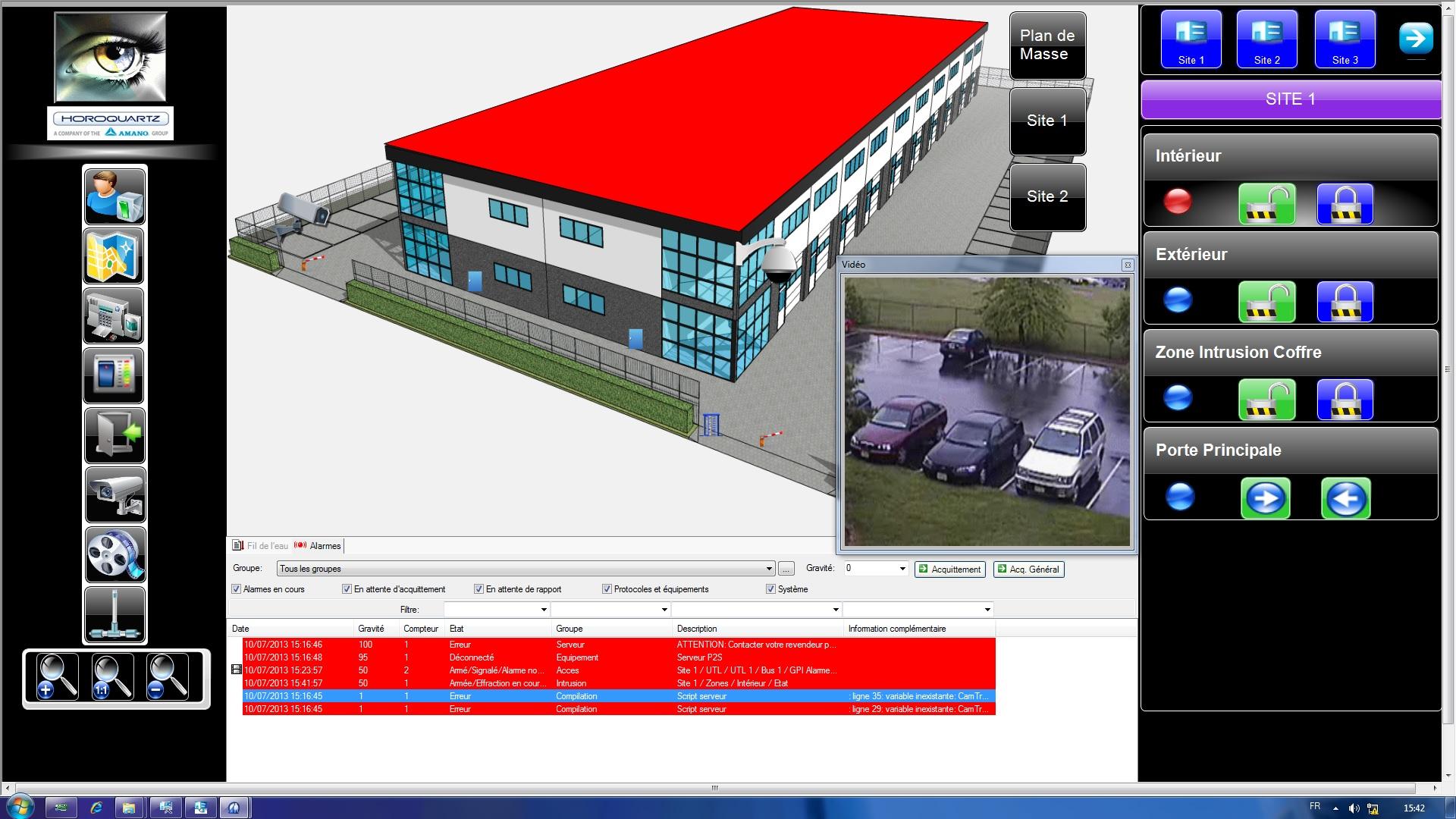

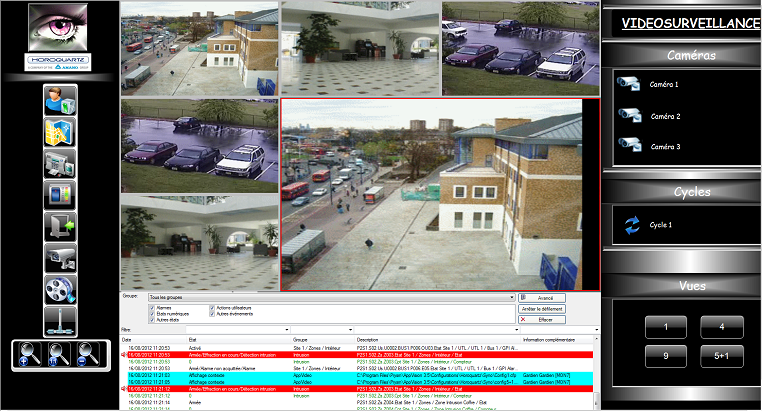

Protecsys 2 Suite: pricing

Standard plan: price on request

Alternatives to Protecsys 2 Suite

Streamline IT management with powerful software that simplifies Active Directory (AD) management, automates routine tasks, and provides real-time reporting.

ManageEngine ADManager Plus offers a comprehensive solution for managing AD, enabling administrators to create, modify, and delete users, groups, and computers with ease. The software automates tasks such as password resets and group membership changes, reducing the time and effort required for routine tasks.

Read our analysis of ManageEngine ADManager Plus

Simplify identity and access management with comprehensive auditing and reporting tools.

Keep track of user activities, monitor security events and identify potential threats with ease. Gain insights into user behaviour, set alerts and automate compliance reporting.

Read our analysis of ManageEngine ADAudit Plus

Securely store and manage all your passwords in one place with this user-friendly software.

LockPass makes password management easy with its encrypted database, autofill feature, and password generator. Say goodbye to forgotten passwords and hello to peace of mind.

Read our analysis of LockPass

Benefits of LockPass

- A management console for administering users

- Available as a mobile application

- Simple interface and quick deployment
